Evolution of laparoscopic education and the laparoscopic learning curve: a review of the literature

Introduction

Laparoscopic surgery offers numerous advantages to patients, including reduced pain, shortened length of hospital stay, and decreased adhesion formation (1-3). Over the past decades, laparoscopic surgery has been used for more varied and increasingly complex operations, allowing more and more surgical patients to experience the benefits of minimally invasive surgery (MIS) (4-6). However, trainees face a significant learning curve when performing laparoscopic surgery (7). This problem is magnified for more complex laparoscopic operations, and novel teaching approaches are necessary to prepare the next generation of surgeons for independent practice.

The surgical “learning curve” was described by Tyras and colleagues over 40 years ago with regard to the discovery that outcomes following internal mammary artery coronary artery bypass grafting improved after the first two years of experience (8). This concept has since been applied to innumerable procedures and operations in medicine and surgery. In surgery, the learning curve has been defined as “the amount of procedural training required for a surgeon to achieve competence in a new procedure” (9). Authors have aimed to define timelines of learning curves with strategies to shorten or “flatten” the learning curve to expedite training. Others have highlighted ethical conundrums associated with learning curves in surgery given that poor outcomes may be seen when a surgeon has yet to achieve proficiency (10). Indeed, as advanced laparoscopy has become widespread, establishing a level of proficiency has become more important than ever.

This narrative literature review aims to examine current laparoscopic surgical training and the laparoscopic learning curve. We also aim to discuss methods and future directions that hold promise for shortening the laparoscopic learning curve. We present the following article in accordance with the Narrative Review reporting checklist (available at https://ls.amegroups.com/article/view/10.21037/ls-22-29/rc).

Methods

A literature search was performed through the MEDLINE database (Table 1). All article publication years and all study designs were considered for English-language studies. The search terms included “surgical learning curve”, “laparoscopic learning curve”, “laparoscopic education”, and “laparoscopic simulation”. Hand searches of the references of retrieved literature were performed. The results were reviewed until thematic saturation was reached.

Table 1

| Items | Specification |
|---|---|
| Date of search | January 13, 2022 |
| Databases and other sources searched | MEDLINE |
| Search terms used | Surgical learning curve, laparoscopic learning curve, laparoscopic education, laparoscopic simulation |
| Timeframe | All dates considered |
| Selection process | Abstract review to assess for relevance to laparoscopic education and the laparoscopic learning curve |
| Any additional considerations | Hand searches of the references of retrieved literature were performed. Results were reviewed until thematic saturation was reached |

Results and discussion

Current laparoscopic surgical training

Traditionally, the majority of surgical training has been performed in the operating room on patients. The Accreditation Council for Graduate Medical Education (ACGME) has defined minimums for general surgery residents’ graduate case logs, which include at least 100 basic laparoscopic cases and at least 75 complex laparoscopic cases (11). Most authors define basic laparoscopy to include diagnostic laparoscopy, laparoscopic cholecystectomy, and laparoscopic appendectomy; complex laparoscopy comprises all other laparoscopic procedures (12). Many trainees’ first laparoscopic experience is holding the laparoscope and observing during laparoscopic cases (13). With each year of residency training, trainees are granted increasing autonomy, first on basic laparoscopic procedures and later on more advanced laparoscopic procedures (14). Unfortunately, graduating general surgery residents achieve meaningful autonomy in only a small subset of laparoscopic procedures, possibly related to a shift in culture, concerns about safety and supervision, changes in work schedules, and a lack of universal standards (14). Many finish residency not yet prepared for independent practice in a wide range of laparoscopic procedures (15). In this setting, graduating residents have increasingly pursued additional laparoscopic training through an MIS Fellowship (16).

Acknowledgment of the limitations of teaching surgical skills only in the operating room on actual patients has led to the rapid expansion of simulation. Simulation allows trainees to learn in a low-risk environment, engage in opportunities for feedback and assessment, and receive standardized technical skill training that is not always possible through learning done solely in the clinical environment (17). In addition, simulation-based training can boost surgery residents’ experience via repetitions when there is an insufficient case volume, as some skills simply require a large number of trials with feedback to achieve proficiency (18). Laparoscopic simulation curricula encompass both basic and advanced laparoscopic skills and can be performed on box trainers (BT) or virtual reality (VR) simulators (19-21). Numerous BT and VR simulators have been developed to provide training transferable to the operating room. BTs have been developed by multiple companies, institutions, and individuals, and generally involve a sealed or partially sealed box with holes and a camera. VR simulators include LapSim (Surgical Science, Inc., Göteborg, Sweden) and Lap Mentor™ (3D Systems, Inc., Simbionix, Airport City, Israel), among others (22). Multiple studies have compared BT and VR simulators and found no clearly superior modality (23). Newer VR simulators have piloted an “immersive” simulation with 360-degree views of an operating room; while not exact replicas of the operative experience, these new immersive simulations have shown promise to prepare trainees for several of the real-life challenges of being in the operating room (24).

Beyond BT and VR simulators, animal and cadaveric models have been employed to provide realistic, tissue-based simulation training (25). These models have been performed in both in vivo and ex vivo scenarios (22,26). Multiple studies have shown tissue-based models to have validity and to prepare trainees for the operating room (27,28). Limitations of animal-based models include varied anatomy and ethical issues, while cadaveric models are generally limited by lack of perfusion and distorted tissue handling (29). Advanced perfused cadaveric models have high fidelity but at increased cost and logistic difficulties (26).

Many basic curricula center on the Fundamentals of Laparoscopic Surgery (FLS) tasks, which were created to develop a simulation program that did not require the use of tissue. These tasks were relatively inexpensive, used laparoscopic instruments also used in the operating room, and were sufficiently flexible to add additional exercises; the tasks were “not specifically designed to simulate a specific surgical operation” but rather to provide “fundamental training for most laparoscopic skills used in the majority of surgical operations” (30). FLS is a standardized approach to teaching the “knowledge and technical skills required in basic laparoscopic surgery” and has been adopted by the American Board of Surgery as a board certification requirement (31).

In addition to basic laparoscopic simulation curricula, several more advanced laparoscopic simulation curricula have also been developed. One successful curriculum, advanced training in laparoscopic abdominal surgery (ATLAS) modified tasks from FLS to create challenging, more realistic simulations (32). ATLAS involves tasks such as intracorporeal suturing at an angle and in different orientations. A second curriculum that addresses the training gap in advanced laparoscopic simulation was developed in Santiago, Chile, and uses a jejunojejunostomy model with graduated tasks to facilitate skill acquisition. Trainees who completed the course using the advanced trainer performed better than practicing general surgeons who had not completed the course on final assessment (33). Investigators also found a high correlation between this simulation and the actual operation (28).

In these and other laparoscopic curricula, remote and home-based simulation training models have also gained prominence to allow trainees to learn laparoscopic skills at flexible times and locations. For example, a mobile application (“Lapp”) was developed to connect learners using simulators at remote sites to instructors for the jejunojejunostomy model (34). Remote instruction through Lapp was shown to be similarly effective to in-person feedback and allows for asynchronous feedback, thus decreasing demands on faculty time. Video-based explanations in the application further reduced the need for instructor feedback (35). This type of remote learning became more widespread with the coronavirus pandemic (36,37). As a result, learners have become more accustomed to remote learning, and off-site training offers an accessible option for laparoscopic skills acquisition from anywhere (38).

Feedback is a vital aspect of intraoperative and simulation-based skills acquisition. Research indicates that a lack of feedback limits trainees’ ability to refine critical skills. A qualitative study investigating barriers to laparoscopic home simulation found that sub-optimal feedback prevented trainees from getting the most out of home training (39). The scoping review recommended that trainees undergoing off-site training use affordable, portable BTs and participate in a curriculum with clear learning objectives and feedback. The authors further note that “simply equipping trainees with a BT and relying on them to practice without an overall training structure is an antiquated strategy and should be abandoned”. One way to increase feedback is to implement a formative feedback tool for laparoscopic skills (40). Such tools can prepare trainees to engage in deliberate practice and improve skills more efficiently. Another way is to include peer feedback as part of the curriculum. There have been numerous purported theoretical advantages to incorporating peer feedback into medical education, including reducing teaching pressure on faculty, creating a comfortable learning environment, and increasing learner motivation (41). Strong evidence exists supporting the effectiveness of peer-assisted learning through the social and cognitive congruence among peers (42-44).

Laparoscopic training has expanded from the operating room to the simulation center and now includes remote simulation using varied basic and advanced laparoscopic curricula. The training aims to prepare surgical residents and fellows to perform a broadening range of laparoscopic procedures safely.

Establishing the learning curve

To quantify the progress of a surgeon’s training, many authors have sought to define laparoscopic procedures’ learning curve. Prior authors have cited this as the “magic number” of cases, which signifies “the number of cases required to reach stability or technical competence” (5). This number will vary widely by surgeon based on the surgeon’s prior experience in related open operations, unrelated MIS operations, and simulation (5). Learning may occur more quickly with mentorship, coaching, and multidisciplinary collaborations (45,46). Furthermore, the “magic number” may also be different for similar operations performed for different indications. For example, different types of adrenal tumors may present different challenges for laparoscopic resections (6). Some authors have attempted to address this by defining learning curves for both simple and complex cases of a particular type of operation (47). It may also be prudent to define learning curves for different parts of a single procedure. Indeed, the number of cases required to achieve proficiency for one part of a complex case may differ from the number of cases for another part of the same procedure. Reaching a consensus on the phases of the laparoscopic surgery learning curve may help quantify surgeons’ progression through training and determine readiness for independent operating.

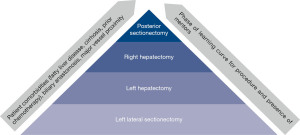

As such, determining the factors by which to set the standard for a learning curve becomes important. From a theoretical standpoint, Bloom’s taxonomy can be used to understand laparoscopic learning curves (48). This taxonomy contains six levels of learning, from the most basic (remembering) to the most complex (creating). In this framework, surgeons would first remember the steps of an operation and understand the purpose of each step. They would then apply those steps to perform an operation. After the operation, surgeons would analyze their results and evaluate their method to determine any improvements to their technique. Finally, they may create novel approaches to address challenges with the existing technique. While a useful model for understanding how a surgeon progresses along a learning curve cognitively, this is challenging to quantify rigorously and is not generally done. As a result, authors have evaluated measurable factors such as operative time, post-operative length of stay, estimated blood loss, conversion to an open procedure, post-operative complications, cancer recurrence, hospital readmission, and mortality when evaluating various procedures’ learning curves (5,6,49-56). Still others have assessed laparoscopic performance and determined a learning curve on laparoscopic simulators equipped with force, motion, and time trackers (57). These have been shown to reflect trainee competence and correlate with other skill assessments. Among these many methods for determining the learning curve, operative time is very commonly reported because it is easily measured and readily extracted from the electronic medical record. However, operative time may not be the most reflective of the laparoscopic learning curve given case variability. Surgeons who have more experience may perform more complicated operations laparoscopically; for example, a hepatobiliary surgeon with a high level of experience may be more likely to resect a posterior liver tumor with vascular involvement in an anatomically complex patient with cirrhosis and/or obesity than a less experienced surgeon limited to resecting straightforward left lateral tumors in healthy patients (58). Furthermore, operations that are still in the infancy of their development, further discussed below, may have longer learning curves. The increased time associated with the more involved or newer operation, which may be generalized as a “laparoscopic liver resection”, reflects increased case complexity rather than decreased operator proficiency (Figure 1).

Regardless of the factors chosen when determining the learning curve, cumulative sum analysis (CUSUM) has been commonly used to report the learning curve by describing the “plateau” point of the chosen factor (49,52,56,59-61). Page developed CUSUM in 1954 for industrial problems. Subsequently, CUSUM was adapted for monitoring surgical performance first by Williams in 1992 with subsequent adaptations as described by Steiner et al. in 2000 (62). CUSUM allows for performance assessment by detecting changes in performance over time, which makes it useful for learning curve analysis. However, there are limits to this interpretation of the learning curve. Peláez and colleagues conducted a study assessing “curve stabilization” in novices and experts (53). They found that although both novices and experts experienced curve stabilization, this occurred at a lower overall point for novices than experts, suggesting that performance stability may not indicate performance proficiency. In addition, many descriptions of the number of cases required to plateau are based on a single surgeon’s experience, which could be skewed based on that surgeon’s experiences as described above (50,61). When a larger sample of surgeons is used to determine the learning curve, there is generally a longer reported curve (5).
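As a rough illustration of how a CUSUM learning curve can be computed from a series of cases, the sketch below uses one simple formulation: the running sum of each case's deviation from a target value, here the mean of the series. The function names `cusum` and `turning_point` and the operative times are hypothetical and chosen only for illustration; the cited studies may use other CUSUM variants, such as risk-adjusted forms.

```python
from itertools import accumulate
from statistics import mean


def cusum(values, target=None):
    """Return the running sum of deviations of each case from a target.

    If no target is given, the mean of the series is used (an assumption
    made for this sketch, not a rule taken from the cited studies).
    """
    if target is None:
        target = mean(values)
    return list(accumulate(v - target for v in values))


def turning_point(values, target=None):
    """Return the 1-based case number at which the CUSUM curve peaks."""
    curve = cusum(values, target)
    return curve.index(max(curve)) + 1


# Hypothetical operative times in minutes for ten consecutive cases.
times = [200, 190, 185, 170, 150, 140, 135, 130, 130, 130]

curve = cusum(times)
print(curve)                 # rises while cases run longer than average, then falls
print(turning_point(times))  # case number where the curve peaks
```

In this formulation the curve climbs while cases take longer than the target and descends once they become shorter, so the peak is often read as the point where performance begins to stabilize. As noted above, such a turning point describes stability of the chosen measure and does not by itself demonstrate proficiency.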

Certain factors show no learning curve period at all for some operations. For example, one study found that while operative time decreased with increasing experience for single-incision laparoscopic appendectomy, complications remained stable (51). As another study notes, “the definition of the learning curve itself is not very objective, but it is based on arbitrarily chosen parameters” (55). The learning curve remains a fraught concept, which is a major barrier to defining parameters for assessing the safety of surgeons independently performing complex laparoscopic operations. As such, case numbers and learning curve analyses may be best suited for formative, rather than evaluative, purposes.

Shortening the learning curve

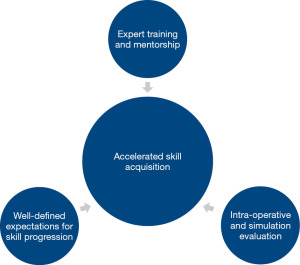

Regardless of the difficulties in defining learning curves for laparoscopy, it is important to identify factors that accelerate laparoscopic surgical training or “shorten the learning curve”. Given the differences in instrumentation and technique compared with open surgery, there are significant challenges associated with laparoscopic training that are further magnified by increasing case complexity. Therefore, an effective surgical training program must include a number of essential pieces to facilitate laparoscopic skill acquisition: expert training and mentorship, well-defined expectations for skill progression, and intra-operative evaluation (Figure 2).

Training and mentorship by expert-level surgeons

Studies seeking to shorten the learning curve and expedite training have defined distinct phases of surgical learning: Initiation, Standardization, and Proficiency (ISP model) (63). Published data on minimally invasive hepatectomy define the learning curve’s three phases according to the number of cases performed. Different learning curves exist among different generations of laparoscopic surgeons. For example, in a 2019 study by Halls et al., pioneer surgeons performing laparoscopic liver surgery (LLS) progressed through the ISP phases for cases 1 through 50, cases 51 through 135, and cases after 135, respectively. In contrast, the next generation of surgeons performing LLS, labeled “early adopters”, progressed more expeditiously through the learning curve phases (first 17 cases, cases 18 through 46, and cases after 46, respectively) (59). These differences are likely due to the expanding pool of expert surgeons to teach skills to others after the “pioneer” phase, as well as the increased clarity of indications and the stability of the operative platform. This highlights the ability to shorten the phases of the surgical learning curve through training and mentoring via a master-apprentice model, e.g., early introduction to laparoscopic training in residency with structured mentorship from expert laparoscopic surgeons. Experts from the “pioneer” phase can provide tailored feedback to their successors based on prior experienced errors. Ideally, these experts would receive training in operative coaching to better refine learners’ skills (64). For highly complex cases, further mastery can be achieved through completion of a dedicated fellowship.
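The case-number boundaries quoted above can be expressed as a small lookup, which makes the difference between the two generations of surgeons easy to compare. This is only a sketch: the names `PHASE_ENDS` and `isp_phase` and the cohort labels are hypothetical, and the thresholds are simply those reported in the text for laparoscopic liver surgery, not general cut-offs.

```python
from bisect import bisect_left

PHASES = ("Initiation", "Standardization", "Proficiency")

# Last case number of the Initiation and Standardization phases,
# taken from the figures quoted in the text for laparoscopic liver surgery.
PHASE_ENDS = {
    "pioneer": (50, 135),
    "early_adopter": (17, 46),
}


def isp_phase(case_number, cohort):
    """Return the ISP phase for a surgeon's nth case in the given cohort."""
    if case_number < 1:
        raise ValueError("case_number must be 1 or greater")
    # bisect_left counts how many phase boundaries lie strictly below the case number.
    return PHASES[bisect_left(PHASE_ENDS[cohort], case_number)]


for n in (17, 18, 46, 47, 135, 136):
    print(n, isp_phase(n, "pioneer"), isp_phase(n, "early_adopter"))
```

For instance, case 47 falls in the Initiation phase for a pioneer but already in the Proficiency phase for an early adopter under these reported boundaries.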

Defining expectations for skill progression

Conclusions from the 2015 Morioka and 2017 Southampton European Guidelines Meeting for Laparoscopic Liver Surgery (EGMLLS) emphasize that safe expansion of LLS requires a stepwise progression in training, specifically via incremental increases in technical difficulty and complexity of cases performed (65,66). Existing difficulty scoring systems such as the IWATE-score and Halls score include tumor and resection data while neglecting patient factors that contribute to case complexity (58,67-69).

Halls et al. queried international laparoscopic liver surgeons to identify elements that inform the difficulty of a laparoscopic hepatectomy and found that elevated body mass index, neoadjuvant chemotherapy, prior open abdominal surgery, and concurrent procedures added moderate difficulty. In contrast, maximal difficulty was attributed to cases with proximity to major hilar structures or patients with prior liver resections (58). Authors have demonstrated expedited skill acquisition and safety with stepwise introduction of LLS (70), yet there remains a need for novel classification systems that incorporate the aforementioned patient factors to better guide stepwise training and selection of procedures appropriate to level of surgical skill as discussed in the prior section.

Validation of existing and novel scoring systems, followed by expert consensus on the appropriate stepwise progression of training based on difficulty rating, can inform a standardized approach to laparoscopic skill progression that is replicable across institutions. This standardized approach can assist with learner feedback, case selection, and evaluation.

Evaluation of performance in the operating room and simulation setting

Early introduction to MIS training ideally begins in the simulation lab with gradual transition to actual operative cases. Unfortunately, standardized objective evaluation tools have yet to be widely adopted for feedback in the operating room.

The Objective Structured Assessment of Technical Skills (OSATS) has been used since the 1990s with high reliability and construct validity for bench model simulations including those for laparoscopic surgery (71,72). A 2013 study demonstrated the efficacy of OSATS in evaluating surgical trainees’ performance during live open and laparoscopic operations at a single institution in Japan (73). Specifically for laparoscopic surgery, the Global Operative Assessment of Laparoscopic Skills (GOALS) was developed in 2005 to evaluate five domains of surgery: depth perception, bimanual dexterity, efficiency, tissue handling, and autonomy (74). Initially validated for laparoscopic cholecystectomy and appendectomy, GOALS has been more recently validated as an assessment tool for more complex laparoscopic operations (74-76).

OSATS and GOALS are useful for formative assessment but lack procedure specificity and cut-off scores for examination or credentialing (77,78). As a result, procedure-based assessments (PBAs) have been developed to measure performance on key technical steps of a specific procedure based on objective measures such as scale of independence (79). PBAs have been effective at discriminating between all skill levels and assessing independent operating capability for laparoscopic cholecystectomy among trainees (80). PBAs remain to be developed and validated for other simple and complex laparoscopic procedures.

Other objective methods of assessing technical skills include dexterity analysis systems (81) and assessments through VR simulators (82,83). These scoring tools primarily evaluate technical performance and do not reflect non-technical skills such as communication, decision making, and leadership, which are key to operative mastery. As such, additional evaluation methods will be required for more comprehensive feedback.

Overall, these three methods of improving laparoscopic training—expert training and mentorship, well-defined expectations for skill progression, and intra-operative evaluation—may have a role in shortening the laparoscopic learning curve. Concern about the heterogeneity of time-based training has prompted renewed interest in competency-based curricula for surgical trainees (84). Competency-based education, defined as “an outcome-based approach to education”, has started to take root in surgical education in programs like FLS and ACGME milestones (84). Prior authors have published competency-based programs for training in laparoscopic surgery, which build on the evaluation tools discussed above (85). As surgical training programs across the country and world emphasize competency-based training, establishing efficient and accessible laparoscopic training programs becomes more important.

Conclusions

Defining learning curves is challenging and can be arbitrary. Nonetheless, as the complexity of laparoscopy continues to evolve, improving laparoscopic education and confirming provider competency is increasingly important to ensure patient safety. Several components can strengthen current laparoscopic surgical training, including expert training and mentorship, well-defined expectations for skill progression, and intra-operative and simulation evaluation. Incorporating these components into laparoscopic training can accelerate skill acquisition to “shorten the learning curve” and improve trainee competence in a safe and low-stakes environment.

Acknowledgments

Funding: None.

Footnote

Provenance and Peer Review: This article was commissioned by the Guest Editors (Allan Tsung and Aslam Ejaz) for the series “Implementation of New Technology/Innovation in Surgery” published in Laparoscopic Surgery. The article has undergone external peer review.

Reporting Checklist: The authors have completed the Narrative Review reporting checklist. Available at https://ls.amegroups.com/article/view/10.21037/ls-22-29/rc

Peer Review File: Available at https://ls.amegroups.com/article/view/10.21037/ls-22-29/prf

Conflicts of Interest: All authors have completed the ICMJE uniform disclosure form (available at https://ls.amegroups.com/article/view/10.21037/ls-22-29/coif). The series “Implementation of New Technology/Innovation in Surgery” was commissioned by the editorial office without any funding or sponsorship. RB is a surgical research resident who is funded through the “Intuitive Surgical - University of California, San Francisco Simulation-Based Surgical Education Research Fellowship”. This funding began after the initial writing of this manuscript. No part of this manuscript was reviewed by anyone from Intuitive Surgical. The authors have no other conflicts of interest to declare.

Ethical Statement: The authors are accountable for all aspects of the work in ensuring that questions related to the accuracy or integrity of any part of the work are appropriately investigated and resolved.

Open Access Statement: This is an Open Access article distributed in accordance with the Creative Commons Attribution-NonCommercial-NoDerivs 4.0 International License (CC BY-NC-ND 4.0), which permits the non-commercial replication and distribution of the article with the strict proviso that no changes or edits are made and the original work is properly cited (including links to both the formal publication through the relevant DOI and the license). See: https://creativecommons.org/licenses/by-nc-nd/4.0/.

References

1. Bates AT, Divino C. Laparoscopic surgery in the elderly: a review of the literature. Aging Dis 2015;6:149-55.

2. Arezzo A. The past, the present, and the future of minimally invasive therapy in laparoscopic surgery: a review and speculative outlook. Minim Invasive Ther Allied Technol 2014;23:253-60.

3. Zhang FW, Zhou ZY, Wang HL, et al. Laparoscopic versus open surgery for rectal cancer: a systematic review and meta-analysis of randomized controlled trials. Asian Pac J Cancer Prev 2014;15:9985-96.

4. Buell JF, Cherqui D, Geller DA, et al. The international position on laparoscopic liver surgery: The Louisville Statement, 2008. Ann Surg 2009;250:825-30.

5. Chan KS, Wang ZK, Syn N, et al. Learning curve of laparoscopic and robotic pancreas resections: a systematic review. Surgery 2021;170:194-206.

6. Tarallo M, Crocetti D, Fiori E, et al. Criticism of learning curve in laparoscopic adrenalectomy: a systematic review. Clin Ter 2020;171:e178-82.

7. Dagash H, Chowdhury M, Pierro A. When can I be proficient in laparoscopic surgery? A systematic review of the evidence. J Pediatr Surg 2003;38:720-4.

8. Tyras DH, Barner HB, Kaiser GC, et al. Bypass grafts to the left anterior descending coronary artery: saphenous vein versus internal mammary artery. J Thorac Cardiovasc Surg 1980;80:327-33.

9. Leijte E, de Blaauw I, Van Workum F, et al. Robot assisted versus laparoscopic suturing learning curve in a simulated setting. Surg Endosc 2020;34:3679-89.

10. Eyers T. Laparoscopic and other colorectal trials: ethics of the learning curve. ANZ J Surg 2017;87:859.

11. Review Committee for Surgery. Defined Category Minimum Numbers for General Surgery Residents and Credit Role [Internet]. 2019. Available online: https://www.acgme.org/globalassets/definedcategoryminimumnumbersforgeneralsurgeryresidentsandcreditrole.pdf

12. Reynolds FD, Goudas L, Zuckerman RS, et al. A rural, community-based program can train surgical residents in advanced laparoscopy. J Am Coll Surg 2003;197:620-3.

13. Abbas P, Holder-Haynes J, Taylor DJ, et al. More than a camera holder: teaching surgical skills to medical students. J Surg Res 2015;195:385-9.

14. Bohnen JD, George BC, Zwischenberger JB, et al. Trainee Autonomy in Minimally Invasive General Surgery in the United States: Establishing a National Benchmark. J Surg Educ 2020;77:e52-62.

15. George BC, Bohnen JD, Williams RG, et al. Readiness of US General Surgery Residents for Independent Practice. Ann Surg 2017;266:582-94.

16. Shockcor N, Hayssen H, Kligman MD, et al. Ten Year Trends in Minimally Invasive Surgery Fellowship. JSLS 2021;25:e2020.

17. Palter VN, Grantcharov TP. Simulation in surgical education. CMAJ 2010;182:1191-6.

18. Malangoni MA, Biester TW, Jones AT, et al. Operative experience of surgery residents: trends and challenges. J Surg Educ 2013;70:783-8.

19. Brinkmann C, Fritz M, Pankratius U, et al. Box- or Virtual-Reality Trainer: Which Tool Results in Better Transfer of Laparoscopic Basic Skills?-A Prospective Randomized Trial. J Surg Educ 2017;74:724-35.

20. Li MM, George J. A systematic review of low-cost laparoscopic simulators. Surg Endosc 2017;31:38-48.

21. Yiasemidou M, de Siqueira J, Tomlinson J, et al. "Take-home" box trainers are an effective alternative to virtual reality simulators. J Surg Res 2017;213:69-74.

22. Sankaranarayanan G, Parker L, De S, et al. Simulation for Colorectal Surgery. J Laparoendosc Adv Surg Tech A 2021;31:566-9.

23. Alaker M, Wynn GR, Arulampalam T. Virtual reality training in laparoscopic surgery: A systematic review & meta-analysis. Int J Surg 2016;29:85-94.

24. Frederiksen JG, Sørensen SMD, Konge L, et al. Cognitive load and performance in immersive virtual reality versus conventional virtual reality simulation training of laparoscopic surgery: a randomized trial. Surg Endosc 2020;34:1244-52.

25. Van Bruwaene S, Schijven MP, Napolitano D, et al. Porcine cadaver organ or virtual-reality simulation training for laparoscopic cholecystectomy: a randomized, controlled trial. J Surg Educ 2015;72:483-90.

26. Faure JP, Breque C, Danion J, et al. SIM Life: a new surgical simulation device using a human perfused cadaver. Surg Radiol Anat 2017;39:211-7.

27. Jhala T, Zundel S, Szavay P. Surgical simulation of pediatric laparoscopic dismembered pyeloplasty: Reproducible high-fidelity animal-tissue model. J Pediatr Urol 2021;17:833.e1-4.

28. Boza C, Varas J, Buckel E, et al. A cadaveric porcine model for assessment in laparoscopic bariatric surgery--a validation study. Obes Surg 2013;23:589-93.

29. Sutton ERH, Billeter A, Druen D, et al. Development of a human cadaver model for training in laparoscopic donor nephrectomy. Clin Transplant 2017;

30. Derossis AM, Fried GM, Abrahamowicz M, et al. Development of a model for training and evaluation of laparoscopic skills. Am J Surg 1998;175:482-7.

31. ABS to Require ACLS, ATLS and FLS for General Surgery Certification | American Board of Surgery [Internet]. [cited 2021 Mar 17]. Available online: https://www.absurgery.org/default.jsp?news_newreqs

32. Nepomnayshy D, Whitledge J, Birkett R, et al. Evaluation of advanced laparoscopic skills tasks for validity evidence. Surg Endosc 2015;29:349-54.

33. Varas J, Mejía R, Riquelme A, et al. Significant transfer of surgical skills obtained with an advanced laparoscopic training program to a laparoscopic jejunojejunostomy in a live porcine model: feasibility of learning advanced laparoscopy in a general surgery residency. Surg Endosc 2012;26:3486-94.

34. Quezada J, Achurra P, Jarry C, et al. Minimally invasive tele-mentoring opportunity-the mito project. Surg Endosc 2020;34:2585-92.

35. Quezada J, Achurra P, Asbun D, et al. Smartphone application supplements laparoscopic training through simulation by reducing the need for feedback from expert tutors. Surg Open Sci 2019;1:100-4.

36. Dickinson KJ, Gronseth SL. Application of Universal Design for Learning (UDL) Principles to Surgical Education During the COVID-19 Pandemic. J Surg Educ 2020;77:1008-12.

37. Sabharwal S, Ficke JR, LaPorte DM. How We Do It: Modified Residency Programming and Adoption of Remote Didactic Curriculum During the COVID-19 Pandemic. J Surg Educ 2020;77:1033-6.

38. Jarry Trujillo C, Achurra Tirado P, Escalona Vivas G, et al. Surgical training during COVID-19: a validated solution to keep on practicing. Br J Surg 2020;107:e468-e469.

39. Blackhall VI, Cleland J, Wilson P, et al. Barriers and facilitators to deliberate practice using take-home laparoscopic simulators. Surg Endosc 2019;33:2951-9.

- McKendy KM, Watanabe Y, Bilgic E, et al. Establishing meaningful benchmarks: the development of a formative feedback tool for advanced laparoscopic suturing. Surg Endosc 2017;31:5057-65. [Crossref] [PubMed]

- Ten Cate O, Durning S. Peer teaching in medical education: twelve reasons to move from theory to practice. Med Teach 2007;29:591-9. [Crossref] [PubMed]

- Carter SC, Chiang A, Shah G, et al. Video-based peer feedback through social networking for robotic surgery simulation: a multicenter randomized controlled trial. Ann Surg 2015;261:870-5. [Crossref] [PubMed]

- Vaughn CJ, Kim E, O'Sullivan P, et al. Peer video review and feedback improve performance in basic surgical skills. Am J Surg 2016;211:355-60. [Crossref] [PubMed]

- Anderson TN, Lau JN, Shi R, et al. The Utility of Peers and Trained Raters in Technical Skill-based Assessments: A Generalizability Theory Study. J Surg Educ 2022;79:206-15. [Crossref] [PubMed]

- Rekman JF, Alseidi A. Training for Minimally Invasive Cancer Surgery. Surg Oncol Clin N Am 2019;28:11-30. [Crossref] [PubMed]

- Kluger MD, Vigano L, Barroso R, et al. The learning curve in laparoscopic major liver resection. J Hepatobiliary Pancreat Sci 2013;20:131-6. [Crossref] [PubMed]

- Guilbaud T, Birnbaum DJ, Berdah S, et al. Learning Curve in Laparoscopic Liver Resection, Educational Value of Simulation and Training Programmes: A Systematic Review. World J Surg 2019;43:2710-9. [Crossref] [PubMed]

- Tuma F, Nassar AK. Applying Bloom's taxonomy in clinical surgery: Practical examples. Ann Med Surg (Lond) 2021;69:102656. [Crossref] [PubMed]

- Broering DC, Berardi G, El Sheikh Y, et al. Learning Curve Under Proctorship of Pure Laparoscopic Living Donor Left Lateral Sectionectomy for Pediatric Transplantation. Ann Surg 2020;271:542-8. [Crossref] [PubMed]

- de Rooij T, Cipriani F, Rawashdeh M, et al. Single-Surgeon Learning Curve in 111 Laparoscopic Distal Pancreatectomies: Does Operative Time Tell the Whole Story? J Am Coll Surg 2017;224:826-832.e1. [Crossref] [PubMed]

- Esparaz JR, Jeziorczak PM, Mowrer AR, et al. Adopting Single-Incision Laparoscopic Appendectomy in Children: Is It Safe During the Learning Curve? J Laparoendosc Adv Surg Tech A 2019;29:1306-10. [Crossref] [PubMed]

- Miskovic D, Ni M, Wyles SM, et al. Learning curve and case selection in laparoscopic colorectal surgery: systematic review and international multicenter analysis of 4852 cases. Dis Colon Rectum 2012;55:1300-10. [Crossref] [PubMed]

- Peláez Mata D, Herrero Álvarez S, Gómez Sánchez A, et al. Laparoscopic learning curves. Cir Pediatr 2021;34:20-7. [PubMed]

- Pilka R, Gágyor D, Študentová M, et al. Laparoscopic and robotic sacropexy: retrospective review of learning curve experiences and follow-up. Ceska Gynekol 2017;82:261-7.

- Reitano E, de'Angelis N, Schembari E, et al. Learning curve for laparoscopic cholecystectomy has not been defined: A systematic review. ANZ J Surg 2021;91:E554-60. [Crossref] [PubMed]

- Wang B, Son SY, Shin HJ, et al. The Learning Curve of Linear-Shaped Gastroduodenostomy Associated with Totally Laparoscopic Distal Gastrectomy. J Gastrointest Surg 2020;24:1770-7. [Crossref] [PubMed]

- Hardon SF, Horeman T, Bonjer HJ, et al. Force-based learning curve tracking in fundamental laparoscopic skills training. Surg Endosc 2018;32:3609-21. [Crossref] [PubMed]

- Halls MC, Cherqui D, Taylor MA, et al. Are the current difficulty scores for laparoscopic liver surgery telling the whole story? An international survey and recommendations for the future. HPB (Oxford) 2018;20:231-6. [Crossref] [PubMed]

- Halls MC, Alseidi A, Berardi G, et al. A Comparison of the Learning Curves of Laparoscopic Liver Surgeons in Differing Stages of the IDEAL Paradigm of Surgical Innovation: Standing on the Shoulders of Pioneers. Ann Surg 2019;269:221-8. [Crossref] [PubMed]

- Brown KM, Geller DA. What is the Learning Curve for Laparoscopic Major Hepatectomy? J Gastrointest Surg 2016;20:1065-71. [Crossref] [PubMed]

- Kuge H, Yokoo T, Uchida H, et al. Learning curve for laparoscopic transabdominal preperitoneal repair: A single-surgeon experience of consecutive 105 procedures. Asian J Endosc Surg 2020;13:205-10. [Crossref] [PubMed]

- Steiner SH, Cook RJ, Farewell VT, et al. Monitoring surgical performance using risk-adjusted cumulative sum charts. Biostatistics 2000;1:441-52. [Crossref] [PubMed]

- Gumbs AA, Hilal MA, Croner R, et al. The initiation, standardization and proficiency (ISP) phases of the learning curve for minimally invasive liver resection: comparison of a fellowship-trained surgeon with the pioneers and early adopters. Surg Endosc 2021;35:5268-78. [Crossref] [PubMed]

- Louridas M, Sachdeva AK, Yuen A, et al. Coaching in Surgical Education: A Systematic Review. Ann Surg 2022;275:80-4. [Crossref] [PubMed]

- Wakabayashi G, Cherqui D, Geller DA, et al. Recommendations for laparoscopic liver resection: a report from the second international consensus conference held in Morioka. Ann Surg 2015;261:619-29. [PubMed]

- Abu Hilal M, Aldrighetti L, Dagher I, et al. The Southampton Consensus Guidelines for Laparoscopic Liver Surgery: From Indication to Implementation. Ann Surg 2018;268:11-8. [Crossref] [PubMed]

- Ban D, Tanabe M, Ito H, et al. A novel difficulty scoring system for laparoscopic liver resection. J Hepatobiliary Pancreat Sci 2014;21:745-53. [Crossref] [PubMed]

- Kawaguchi Y, Fuks D, Kokudo N, et al. Difficulty of Laparoscopic Liver Resection: Proposal for a New Classification. Ann Surg 2018;267:13-7. [Crossref] [PubMed]

- Tanaka S, Kubo S, Kanazawa A, et al. Validation of a Difficulty Scoring System for Laparoscopic Liver Resection: A Multicenter Analysis by the Endoscopic Liver Surgery Study Group in Japan. J Am Coll Surg 2017;225:249-258.e1. [Crossref] [PubMed]

- van der Poel MJ, Huisman F, Busch OR, et al. Stepwise introduction of laparoscopic liver surgery: validation of guideline recommendations. HPB (Oxford) 2017;19:894-900. [Crossref] [PubMed]

- Martin JA, Regehr G, Reznick R, et al. Objective structured assessment of technical skill (OSATS) for surgical residents. Br J Surg 1997;84:273-8. [PubMed]

- Reznick R, Regehr G, MacRae H, et al. Testing technical skill via an innovative "bench station" examination. Am J Surg 1997;173:226-30. [Crossref] [PubMed]

- Niitsu H, Hirabayashi N, Yoshimitsu M, et al. Using the Objective Structured Assessment of Technical Skills (OSATS) global rating scale to evaluate the skills of surgical trainees in the operating room. Surg Today 2013;43:271-5. [Crossref] [PubMed]

- Vassiliou MC, Feldman LS, Andrew CG, et al. A global assessment tool for evaluation of intraoperative laparoscopic skills. Am J Surg 2005;190:107-13. [Crossref] [PubMed]

- Gumbs AA, Hogle NJ, Fowler DL. Evaluation of resident laparoscopic performance using global operative assessment of laparoscopic skills. J Am Coll Surg 2007;204:308-13. [Crossref] [PubMed]

- Hogle NJ, Liu Y, Ogden RT, et al. Evaluation of surgical fellows' laparoscopic performance using Global Operative Assessment of Laparoscopic Skills (GOALS). Surg Endosc 2014;28:1284-90. [Crossref] [PubMed]

- Hatala R, Cook DA, Brydges R, et al. Constructing a validity argument for the Objective Structured Assessment of Technical Skills (OSATS): a systematic review of validity evidence. Adv Health Sci Educ Theory Pract 2015;20:1149-75. [Crossref] [PubMed]

- Beard JD, Marriott J, Purdie H, et al. Assessing the surgical skills of trainees in the operating theatre: a prospective observational study of the methodology. Health Technol Assess 2011;15:i-xxi, 1-162. [Crossref] [PubMed]

- Glarner CE, McDonald RJ, Smith AB, et al. Utilizing a novel tool for the comprehensive assessment of resident operative performance. J Surg Educ 2013;70:813-20. [Crossref] [PubMed]

- van Zwieten TH, Okkema S, Kramp KH, et al. Procedure-based assessment for laparoscopic cholecystectomy can replace global rating scales. Minim Invasive Ther Allied Technol 2022;31:865-71. [Crossref] [PubMed]

- Datta V, Mackay S, Mandalia M, et al. The use of electromagnetic motion tracking analysis to objectively measure open surgical skill in the laboratory-based model. J Am Coll Surg 2001;193:479-85. [Crossref] [PubMed]

- Taffinder N, Sutton C, Fishwick RJ, et al. Validation of virtual reality to teach and assess psychomotor skills in laparoscopic surgery: results from randomised controlled studies using the MIST VR laparoscopic simulator. Stud Health Technol Inform 1998;50:124-30. [PubMed]

- Kantamaneni K, Jalla K, Renzu M, et al. Virtual Reality as an Affirmative Spin-Off to Laparoscopic Training: An Updated Review. Cureus 2021;13:e17239. [Crossref] [PubMed]

- Stahl CC, Minter RM. New Models of Surgical Training. Adv Surg 2020;54:285-99. [Crossref] [PubMed]

- Xue D, Bo H, Zhang W, et al. Application of competency-based education in laparoscopic training. JSLS 2015;19:e2014. [Crossref] [PubMed]

Cite this article as: Brian R, Davis G, Park KM, Alseidi A. Evolution of laparoscopic education and the laparoscopic learning curve: a review of the literature. Laparosc Surg 2022;6:34.